Computational Intelligence & Photography Lab | Advisor: Seonjoo Kim

Graduate Researcher - (Sep. 2025 – Present)

Multimodal Intelligence Research Lab | Advisor: Youngjae Yu

Research Intern - (Dec. 2024 – Aug. 2025)

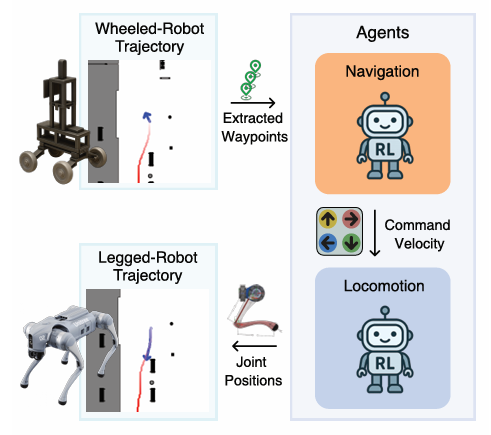

XNav-Pipe: Cross-Platform Robot Navigation Data Generation Pipeline

Sungwoong Kim, Minseo Kim, Junhee Park, Siyeol Kim, Jihwan Yu

- Reconstructed the CANVAS (previous work) VLA pipeline and redesigned the architecture based on Qwen2.5-VL.

- Integrated real-time communication with Unitree Go2 via ROS2 topics, using camera and sensor (coordinate) inputs for multimodal processing.

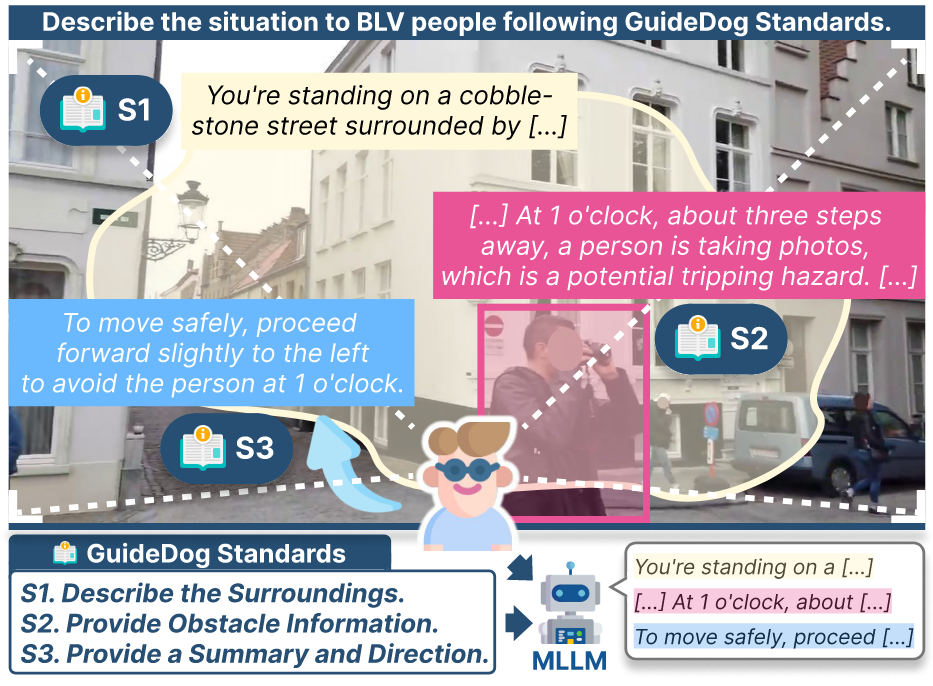

GuideDog: A Real-World Multimodal Dataset for Blind and Low-Vision Accessibility-Aware Guidance

Junhyeok Kim, Jaewoo Park, Junhee Park, Sanyeol Lee, Jiwan Chung

- Distilled global BLV association guidelines into a structured, machine-readable format for VLM integration.

- Built and managed Label Studio pipelines for high-quality description generation, including inter-annotator agreement (IAA) validation.

XL8.ai | U.S. Headquarters

AI Research Intern - (Oct. 2023 – Apr. 2024)

AI-driven media localization with context-aware translations

- Optimized ParaCrawl Dataset: Filtered high-quality translation data using LaBSE with language-specific thresholds, automated the process, and uploaded the results to AWS S3.

- Enhanced Translation Accuracy: Improved translation accuracy for German, Portuguese, and Vietnamese by incorporating number-word augmentation and adding targeted test cases.

- Integrated Translation Evaluation: Combined translation code from XL8, Google, and DeepL, evaluating with BLEU, COMET, and MetricX-23.

- Developed Transliteration Module: Integrated English-to-Korean and Japanese transliteration modules based on comparative analysis.

RealSmart Corporation

Software Engineer - (Nov. 2020 – Dec. 2021)

OMR-based testing, survey analysis, and smart tools for education and business

- Developed the WordTEST Android app using Java and MSSQL with optimized DB performance.

- Managed and maintained 90+ MSSQL databases, performing performance tuning, backups, and security operations.

- Refined a VSTO-based reporting program widely adopted in education and enterprise sectors.

- Enhanced a Windows application used by 500+ academies, 200+ universities, and 300+ companies including Samsung and Seoul National University.